Difference between revisions of "Correlations"


Revision as of 08:30, 10 February 2021

| Method categorization | | |
|---|---|---|
| Quantitative | Qualitative | |
| Inductive | Deductive | |
| Individual | System | Global |
| Past | Present | Future |

In short: A correlation is the statistical relation between two continuous variables.

Background

Karl Pearson is considered to be the founding father of mathematical statistics; hence it is no surprise that one of the central methods in statistics - to test the relation between two continuous variables - was invented by him at the turn of the 20th century (see Karl Pearson's "Note on regression and inheritance in the case of two parents" from 1895). His contribution was based on work by Francis Galton and Auguste Bravais. With more data becoming available and the need for an “exact science” as part of industrialization and the rise of modern science, the Pearson correlation paved the road to modern statistics at the beginning of the 20th century. While other approaches such as the t-test or the Analysis of Variance (ANOVA) by Pearson's arch-enemy Fisher demanded an experimental approach, the correlation simply needed continuous data. Hence it appealed to the demand for an analysis that would only need measuring, as in engineering, or counting, as in economics, as the basis for simple correlative tests, but one that would not be preoccupied too deeply with the reasoning on why variables correlated. Pearson recognized the predictive power of his discovery, and the correlation remains one of the most abundantly used statistical approaches, for instance in economics, ecology, psychology and the social sciences.

What the method does

Correlations analyse the relation between two continuous variables and test whether the relation is statistically significant, typically using statistical software. The test takes both the sample size and the strength of the relation between the two variables into account. The so-called correlation coefficient indicates the strength of the relation and ranges from -1 to 1. Values close to 0 indicate a weak correlation, values close to 1 indicate a strong positive correlation, and values close to -1 indicate a strong negative correlation.
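To make the range of the correlation coefficient more tangible, here is a minimal sketch in R. The data are simulated purely for illustration (the variable names and noise levels are assumptions, not part of this entry); `cor()` returns the coefficient and `cor.test()` adds the significance test.

```r
# Simulated data, chosen only to illustrate the three typical cases
set.seed(42)
n <- 100
x <- rnorm(n)
y_pos  <- x + rnorm(n, sd = 0.3)    # built to follow x closely
y_neg  <- -x + rnorm(n, sd = 0.3)   # built to mirror x
y_none <- rnorm(n)                  # generated independently of x

r_pos  <- cor(x, y_pos)
r_neg  <- cor(x, y_neg)
r_none <- cor(x, y_none)
c(positive = r_pos, negative = r_neg, none = r_none)

# cor.test() reports the coefficient together with t-value,
# degrees of freedom and p-value
cor.test(x, y_pos)
```

The first coefficient should land close to 1, the second close to -1, and the third somewhere around 0; the exact values depend on the random seed.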

Correlations can be applied to continuous variables of very diverse origin, and hence to data across all spatial and temporal scales. The data can originate from surveys, economics, measurements, industry and many other sources, which is why the method is considered one of the most relevant statistical analysis tools. Since correlations are used in both inductive and deductive approaches, they are among the most abundantly used quantitative methods to date.

There are some core questions related to the application of correlations:

1) Are relations between two variables positive or negative, and how strong is the estimate of the relation? Being taller leads to a significant increase in body weight. Being smaller leads to an overall lower gross calorie demand. The strength of this relation - what statisticians call the estimate - is an important measure when evaluating correlations and regressions. (A regression implies a causal link between two continuous variables, which makes it different from a correlation, where two variables are related, but not necessarily causally linked.)

2) Does the relation show a significantly strong effect, or is it rather weak? In other words, can the regression explain a lot of the variance in your data, or is the result rather weak regarding its explanatory power? The correlation coefficient (https://online.stat.psu.edu/stat501/lesson/1/1.6) indicates how strong or weak the correlation is and whether it is positive or negative. It can be between -1 and +1. The relationship between temperature in Celsius and Fahrenheit, for example, is perfectly linear, which should not be surprising as we know that Fahrenheit is defined as 32 + 1.8 * Celsius. Furthermore, we can say that 100% of the variation in temperatures in Fahrenheit is explained by the temperature in Celsius: the correlation coefficient is 1.

3) What does the relation between two variables explain? A correlation can explain a lot of variance for some parts of the data, and less variance for other parts. Take the percentage of people working in agriculture within individual countries. At a low income (<5000 Dollar/year) there is a high variance between countries: half of the population of Chad works in agriculture, while in Zimbabwe, with an even slightly lower income, it is only 10 %. At an income above 15000 Dollar/year, however, there is hardly any variance in the share of people who work in agriculture: the proportion is always very low. There are reasons for this: there are probably one or several variables that explain at least part of the high variance within different income segments. Finding such variables that explain previously unexplained variance is a key effort in correlation analysis.
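The Celsius and Fahrenheit example from question 2 can be checked directly in R. This is a small sketch with an arbitrarily chosen range of temperatures; the noisy variant is an added assumption, included only to contrast a perfect relation with an imperfect one.

```r
celsius <- seq(-20, 40, by = 5)       # illustrative temperatures
fahrenheit <- 32 + 1.8 * celsius      # definition used in the text

r <- cor(celsius, fahrenheit)
r      # correlation coefficient of a perfectly linear relation
r^2    # share of the variance in Fahrenheit explained by Celsius

# For contrast: the same relation with simulated measurement noise
set.seed(1)
fahrenheit_noisy <- fahrenheit + rnorm(length(celsius), sd = 10)
r_noisy <- cor(celsius, fahrenheit_noisy)
r_noisy
r_noisy^2
```

Squaring the coefficient gives the share of explained variance, which is why a coefficient of 1 corresponds to 100% of the variation being explained.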

Strengths & Challenges

- Correlations test for mere relations, but do not depend on deductive reasoning. Hence correlations can be powerful both for inductive predictions and for an initial analysis of data without any underlying theoretical foundation. Yet with the predictive power of correlations comes a great responsibility for the researchers who apply them, as it is tempting to infer causality purely from the results of correlations, even if there is no logical connection explaining the correlation. Economics and other fields have a long history of causal interpretation based on basically inductive correlative results. For more thoughts on the connection between correlations and causality, have a look at this entry: Causality and correlation.

- Correlations are rather easy to apply, and most software allows you to create simple scatterplots that can then be analyzed using correlations. However, you need some minimal knowledge about data distribution, since for instance the Pearson correlation is based on data that is normally distributed.

- There is an endless debate about which correlation coefficient value is high, and which one is low. In other words: how much does a correlation explain, and what is this worth? While this depends widely on the context, it is still remarkable that people keep discussing this. A high relation can be trivial or wrong, while a low relation can be an important scientific result. Above all, even the lack of a statistical relation between two variables is already a statistical result.

Normativity

- While it is tempting to find causality in correlations, this is potentially difficult, because correlations indicate statistical relations, but not causal explanations, which is a subtle but important difference. Diverse disciplines - among them economics, psychology and ecology - are widely built on correlative analysis, yet do not always urge caution in the interpretation of correlations.

- Another normative problem of correlations is rooted in so-called statistical fishing. With more and more data becoming available, there is an increasing chance that certain correlations are significant just by chance, for which there is a corrective procedure available called Bonferroni correction. However, this is hardly ever applied, and since p-value-driven statistics are increasingly viewed critically, the resulting correlations should be seen as an initial form of a mostly inductive analysis, no more, but also no less. With some practice, p-value-driven statistics can be a robust tool to compare statistical relations in continuous data.
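As a rough sketch of how the Bonferroni correction mentioned above can be applied in R: the example below tests all pairs of variables in R's built-in `swiss` dataset and adjusts the resulting p-values with `p.adjust()`. The choice of dataset and the helper names (`var_pairs`, `p_raw`, `p_bonf`) are assumptions made for illustration.

```r
data(swiss)

# All pairwise combinations of the six variables (15 pairs)
var_pairs <- combn(names(swiss), 2)

# Raw p-value of a Pearson correlation test for each pair
p_raw <- apply(var_pairs, 2, function(v) {
  cor.test(swiss[[v[1]]], swiss[[v[2]]])$p.value
})

# Bonferroni: multiply each p-value by the number of tests (capped at 1)
p_bonf <- p.adjust(p_raw, method = "bonferroni")

result <- data.frame(
  var1 = var_pairs[1, ],
  var2 = var_pairs[2, ],
  p_raw = round(p_raw, 4),
  p_bonferroni = round(p_bonf, 4)
)
result[order(result$p_raw), ]

sum(p_raw < 0.05)    # pairs significant before the correction
sum(p_bonf < 0.05)   # pairs significant after the correction
```

The correction can only raise p-values, so the number of significant pairs after the correction is never larger than before it.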

Outlook

Correlations are among the foundational pillars of frequentist statistics. Nonetheless, with science engaging in more complex designs and analyses, correlations will increasingly become less important. As a robust workhorse for initial analysis, however, they will remain a good starting point for many datasets. Time will tell whether other approaches - such as Bayesian statistics and machine learning - will ultimately become more abundant. Correlations may benefit from a clear comparison to results based on Bayesian statistics.

Key Publications

Hazewinkel, Michiel, ed. (2001). Correlation (in statistics). Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers.

Further Information

- If you want to practise recognizing whether a correlation is weak or strong I recommend spending some time on this website. There you can guess the correlation coefficients based on graphs: http://guessthecorrelation.com/

- The correlation coefficient: A very detailed and vivid article

- The relationship of temperature in Celsius and Fahrenheit: Several examples of interpreting the correlation coefficient

- Employment in Agriculture: A detailed database

- Kendall's Tau & Spearman's Rank: Two examples for other forms of correlation

- Strength of Correlation Plots: Some examples

- History of antibiotics: An example for findings when using the inductive approach

- Pearson's correlation coefficient: Many examples

- Pearson correlation: A quick explanation

- Pearson's r Correlation: An example calculation

The author of this entry is Henrik von Wehrden.

OLD ENTRY TEXT - DELETE?

Correlations

Propelled by the general development of science during the Enlightenment, numbers started piling up. With more technological possibilities to measure more and more information, and also to store this information, people started wondering whether these numbers could lead to something. The increasing numbers had diverse sources: some came from science, such as astronomy or other branches of the natural sciences. Other prominent sources of numbers were engineering and economics, such as double bookkeeping. It was thanks to the tandem efforts of Adrien-Marie Legendre and Carl Friedrich Gauss that mathematics offered, with the method of least squares, the first approach to relate one line of data with another. How is one continuous variable related to another? Pandora's box was opened, and questions started to emerge. Economists were the first to utilise regression analysis at a larger scale, relating all sorts of economic and social indicators with each other, building an ever more complex controlling, management and maybe even understanding of statistical relations. The gross domestic product - or GDP - became for quite some time a favorite toy of many economists, and especially growth became a core goal of many analyses to inform policy. What people basically did was ask themselves how one variable is related to another variable.

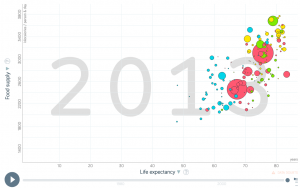

If the nutrition of people increases, do they live longer? (Yes, see above.)

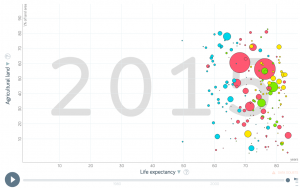

Does a high life expectancy relate to more agricultural land area within a country? (No, as you can see on the left.)

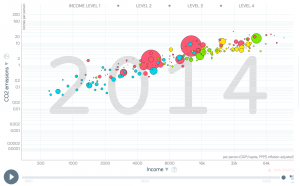

Is a higher income related to more CO2 emissions at a country scale? (Yes, see right below.)

As these relations started coming in, the question of whether two continuous variables are causally related became a nagging thought. With more and more data being available, correlation became a staple of modern statistics. There are some core questions related to the application of correlations.

1) Are relations between two variables positive or negative? Relations between two variables can be positive or negative. Being taller leads to a significant increase in body weight. Being smaller leads to an overall lower gross calorie demand. The strength of this relation - what statisticians call the estimate - is an important measure when evaluating correlations and regressions. Is a relation positive or negative, and how strong is the estimate of the relation?

2) Does the relation show a significantly strong effect, or is it rather weak? In other words, can the regression explain a lot of the variance in your data, or is the result rather weak regarding its explanatory power? The correlation coefficient indicates how strong or weak the correlation is and whether it is positive or negative. It can be between -1 and +1. The relationship between temperature in Celsius and Fahrenheit, for example, is perfectly linear, which should not be surprising as we know that Fahrenheit is defined as 32 + 1.8 * Celsius. Furthermore, we can say that 100% of the variation in temperatures in Fahrenheit is explained by the temperature in Celsius.

3) What does the relation between two variables explain? A relation can explain a lot of variance for some parts of the data, and less variance for other parts. Take the percentage of people working in agriculture within individual countries. At a low income (<5000 Dollar/year) there is a high variance. Half of the population of Chad works in agriculture, while in Zimbabwe, with an even slightly lower income, it is 10 %. At an income above 15000 Dollar/year, there is hardly any variance in the share of people who work in agriculture within a country. The proportion is very low. There are reasons for this: there are probably one or several variables that explain at least part of the high variance within different income segments. Finding such variables that explain previously unexplained variance is a key effort in correlation analysis.

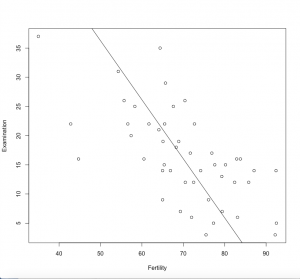

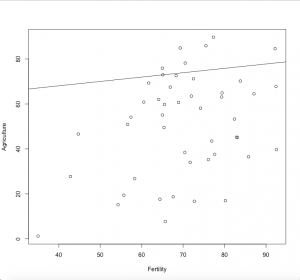

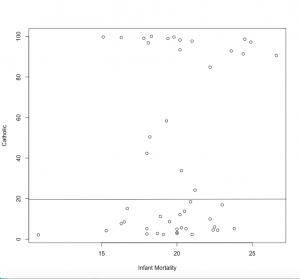

# Let's do some correlations with the swiss data set.
# You find the corresponding plots for the calculations at the right.
data(swiss)

# Now we choose two columns and correlate them
cor(swiss$Fertility, swiss$Examination)
# -0.65 - strong negative correlation
plot(swiss$Fertility, swiss$Examination, xlab = "Fertility", ylab = "Examination")

# Let's try some more
cor(swiss$Fertility, swiss$Agriculture)
# +0.35 - weak positive correlation
plot(swiss$Fertility, swiss$Agriculture, xlab = "Fertility", ylab = "Agriculture")

cor(swiss$Infant.Mortality, swiss$Catholic)
# +0.18 - very weak positive correlation, not correlated
plot(swiss$Infant.Mortality, swiss$Catholic, xlab = "Infant Mortality", ylab = "Catholic")

How do we now calculate a correlation?

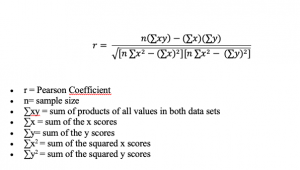

The most abundantly used method of correlation is the Pearson correlation. For the sake of simplicity we will build on this one, as it has the normal distribution at its heart, or more precisely, Student's t-distribution. Kendall's tau and Spearman's rho are other forms of correlation, but I recommend you just look them up, and keep as a rule of thumb that Spearman is more robust when it comes to non-normally distributed data.
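For readers who want to compare the three coefficients, here is a short sketch using the `method` argument of `cor()` on the swiss data. The transformed variable `z` is a made-up illustration, added only to show why rank-based coefficients are less sensitive to the shape of the distribution.

```r
data(swiss)
x <- swiss$Fertility
y <- swiss$Examination

cor(x, y, method = "pearson")    # based on the raw values
cor(x, y, method = "spearman")   # based on ranks
cor(x, y, method = "kendall")    # based on concordant and discordant pairs

# A monotone transformation changes the distribution of y but not the
# order of its values, so Spearman's rho stays the same while
# Pearson's r changes.
z <- exp(y / 10)
rho_y <- cor(x, y, method = "spearman")
rho_z <- cor(x, z, method = "spearman")
r_y <- cor(x, y, method = "pearson")
r_z <- cor(x, z, method = "pearson")
c(rho_y = rho_y, rho_z = rho_z, r_y = r_y, r_z = r_z)
```

Because Spearman's rho only uses the ranks, skewed data or single extreme values affect it far less than they affect Pearson's r.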

For people with an affinity for math, the formula for calculating a Pearson correlation is still tangible. You just need to be aware that you have two variables or samples, called x and y, and their respective means (m). The p-value to determine whether there is a significant relation between x and y can be calculated based on the so-called degrees of freedom. These are the sample size minus 2, because there are two variables. In the example of the swiss data this is df = 47 - 2 = 45, because we have 47 provinces in the dataset, and any given correlation builds on 2 variables. By calculating the t-value and setting it in relation to the degrees of freedom, we get the significance level from a t-distribution table. This was an important breakthrough, since we now realise that our sample size, or better the degrees of freedom, determines our p-value. For a relation of a given strength, a larger sample leads to a smaller p-value, which is no trivial information. In other words, more data leads to clearer knowledge about whether our hypothesis is confirmed or not. Sample size matters!

In the swiss dataset, we can relate for instance the two variables examination and fertility. Both are fairly normally distributed. If we plot them we see a clear relation. But what is the relation in terms of the r-value?
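The calculation described above can be sketched step by step in R. This follows the standard textbook form of the Pearson coefficient and its t-test; the helper `p_from_r()` is a hypothetical name introduced here only to show the effect of sample size.

```r
data(swiss)
x <- swiss$Fertility
y <- swiss$Examination
n <- length(x)

# Pearson's r: joint deviation of x and y from their means, scaled by
# the deviation of each variable on its own
r <- sum((x - mean(x)) * (y - mean(y))) /
  sqrt(sum((x - mean(x))^2) * sum((y - mean(y))^2))

df <- n - 2                            # degrees of freedom
t_value <- r * sqrt(df / (1 - r^2))    # t-value of the correlation
p_value <- 2 * pt(-abs(t_value), df)   # two-sided p-value

c(r = r, t = t_value, df = df, p = p_value)

# Same strength of relation, different sample sizes
p_from_r <- function(r, n) {
  df <- n - 2
  2 * pt(-abs(r * sqrt(df / (1 - r^2))), df)
}
p_small  <- p_from_r(0.3, n = 10)
p_medium <- p_from_r(0.3, n = 47)
p_large  <- p_from_r(0.3, n = 500)
c(n10 = p_small, n47 = p_medium, n500 = p_large)
```

The values of r, t and p computed by hand should agree with what `cor.test(x, y)` reports, and the last line shows how the p-value shrinks as the sample grows while the coefficient stays fixed.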

cor(swiss$Fertility,swiss$Examination)

-0.65, so there is certainly some sort of relation, as we have seen before. But is it significant? As a quick check, some people tend to favour ggplots.

library("ggpubr")

ggscatter(swiss, x = "Fertility", y = "Examination",

cor.coef = TRUE, cor.method = "pearson")

We can get a clearer calculation using

cor.test(swiss$Fertility,swiss$Examination)

Here, everything is neatly packed together: the t-value, the degrees of freedom, and the p-value. The correlation coefficient is given as well. We see that there is a significant negative relation between the two variables. Before your brain now starts to interpret this, I urge you to develop the statistical literacy to read such plots. You need to become able to read correlation plots in your sleep, and be very sure about the relation between the variables, how strong it is, whether it is negative or positive, and whether it is significant. This is the bread and butter of any person versed in statistics.

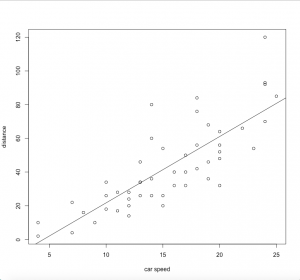

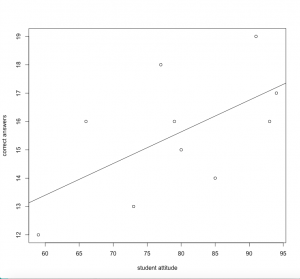

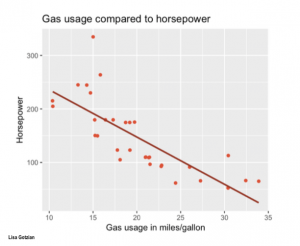

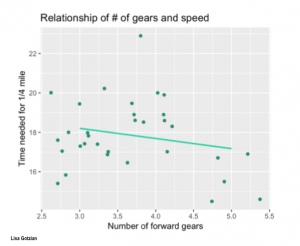

Reading correlation plots

One of the core skills in statistics is to quickly make sense of snapshots of graphics that contain information on correlations. Seeing a correlation plot and being able to read this plot quickly is the daily job of any data analyst.

There are three questions that you should ask yourself whenever you look at a correlation plot:

1) How strong is the relation?

2) Is the relation positive or negative?

3) Does the relation change within parts of the data?

Regarding 1), it is good to practise. Once you get an eye for the strength of a correlation, you become really fast in understanding relations in data. This may be your first step towards a rather intuitive understanding of a method. Having this kind of skill is essential for anyone interested in approximating facts through quantitative data. Obviously, the further the points scatter, the less they explain. If the points are distributed like stars in the sky, then the relation is probably not significant. If they show however any kind of relation, it is good to know the strength.

Regarding 2), relations can be positive or negative (or neutral). The stronger the estimate of a relation is, the more this relation may matter, some may argue. Of course this cannot be entirely generalised, but it is definitely true that a neutral relation only tells you that the relation does not matter. While this is trivial in itself, it is good to get an eye for the strength of estimates, and what they mean for the specific data being analysed. Even a weaker relation may give important initial insights. In addition, the normative value of a positive or negative relation typically has strong implications, especially if both directions are theoretically possible. Therefore it is vital to be able to interpret the estimate of a correlation.

Regarding 3), the best advice is to look at the initial scatterplot, but also at the residuals. If the scattering of all points is more or less equal across the whole relation, then you may conclude that the errors are equally distributed across the relation. In reality, this is often not the case. Instead we often know less about one part of the data, and more about another part of the data. In addition, we often have a stronger relation across some parts of the dataset, and a weaker relation across other parts. These differences are important, as they hint at underlying influencing variables or factors that we do not understand yet. Becoming versed in reading scatter plots becomes a key skill here, as it allows you to track biases and flaws in your dataset and analysis. This is probably the most advanced skill when it comes to reading a correlation plot.
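One possible way to look at the residuals is sketched below. Note that this uses a simple linear model (`lm()`) as a stand-in to obtain residuals, which goes slightly beyond a plain correlation; the split at the median of Fertility is an arbitrary choice for illustration.

```r
data(swiss)
fit <- lm(Examination ~ Fertility, data = swiss)

# Scatterplot with the fitted line
plot(swiss$Fertility, swiss$Examination,
     xlab = "Fertility", ylab = "Examination")
abline(fit)

# Residuals against fitted values: an even band around zero suggests
# that the scatter is similar across the whole relation
plot(fitted(fit), resid(fit),
     xlab = "Fitted values", ylab = "Residuals")
abline(h = 0, lty = 2)

# Compare the spread of the residuals in the lower and upper half
# of the Fertility values
lower <- swiss$Fertility <= median(swiss$Fertility)
spread <- c(lower = sd(resid(fit)[lower]),
            upper = sd(resid(fit)[!lower]))
spread
```

If the two spreads differ clearly, the relation is tighter in one part of the data than in the other, which is the kind of pattern that hints at additional influencing variables.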

Key messages

• Correlation coefficient ranges from -1 to 1

• Inspect the relation for flaws

• Correlations can be inductive and deductive

Articles

Student's T Distribution: An introduction

Degrees of Freedom: A very explanatory article

Student t Distribution Table: Important link to save!

Reading Scatterplots: Some instructions

The History of Inductive Reasoning: A detailed article

Astronomy: Method of least squares

Natural sciences: Regression toward the mean

Double bookkeeping: An example from economics

Adrien Marie Legendre: The French rival of Carl Friedrich Gauss

Carl Friedrich Gauss: One of the greatest mathematicians of all time

Regression Analysis: The origin lies in economics

Gross domestic product: A detailed article

Body weight: An article on the relationship of body weight and energy intake
