Introduction to Pandas

THIS ARTICLE IS STILL IN EDITING MODE

Pandas is an important Python library for data analysis that was introduced in 2008 by McKinney. The name Pandas comes from "Panel Data", which is an econometrics term for multidimensional structured data sets. The library was originally designed to handle this type of data, especially for financial data analysis, which often requires complex manipulation and cleaning. Nowadays, the library is used in a variety of fields, because of their many useful functions for analyzing, cleaning, inspecting and manipulating data.

Contents

General Idea of Pandas

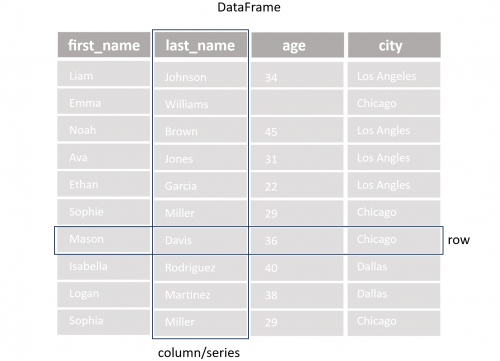

Pandas is developed and optimized for the work with tabular data stored in spreadsheets or databases. For this task two additional data structures are introduced, the Series (one-dimensional array of indexed data) and the DataFrame (two-dimensional array of indexed data). The DataFrame can be seen as a collection of Series, which share the same index. The simple structure of the DataFrame is visualized in the following figure, which shows the data of the file people.csv. This data will be used throughout this introduction to explain the basic functions of Pandas.

Install Pandas

In order to use Pandas, it is necessary to ensure that the library is installed in the Python environment. Otherwise, pip install pandas command in the terminal has to be executed. Afterwards, the library can be important in the document with the following line of code. Furthermore, it is recommended to check the current version of the library.

import pandas as pd

# show pandas version

print(pd.__version__)

Reading and Writing Data

Before any data can be analyzed, it must be read into the current Python environment. Pandas can read a variety of file formats, such as CSV, Excel, JSON, HTML, SQL, etc. The following code gives an example for reading a CSV file. The file is read into a pandas DataFrame, which makes the data accessible for further analysis in Python.

# read csv file

df_csv_people = pd.read_csv('people.csv')

# show the type of df

type(df_csv_people)

If the code is implemented in a final algorithm, it is important to pay attention to the error/exception handling, because there can happen various mistakes during the reading process.

file_path = 'people.csv'

try:

df = pd.read_csv(file_path)

except FileNotFoundError:

print(f"The file {file_path} was not found.")

except pd.errors.EmptyDataError:

print(f"The file {file_path} is empty.")

except pd.errors.ParserError:

print(f"Error parsing the file {file_path}.")

except Exception as e:

print(f"An unexpected error occurred: {e}")

Furthermore, DataFrames can also be created manually with the help of dictionaries in Python.

df_dic_people = {'first_name': ['Jason', 'Molly', 'Tina', 'Jake', 'Amy'],

'last_name': ['Miller', 'Jacobson', 'Ali', 'Milner', 'Cooze'],

'age': [42, 52, 36, 24, 73],

'city': ['Dallas', 'Dallas', 'Los Angles', 'Chicago', 'Chicago']}

This manual creation of DataFrames can be useful to extend a DataFrame from a file by additional values at once.

Note: Since Pandas the append() function was removed. Instead, the concat() function should be used.

# appand one dataframe to another

df_people = pd.concat([df_csv_people, pd.DataFrame(df_dic_people)],ignore_index=True)

When all changes are done, Pandas also provides functions to write the values of the Dataframe back into the old file or into a new one.

# write to csv file with name peoplew.csv

df_people.to_csv('peoplew.csv', index=False)

Getting General Information about DataFrames

The following lines of code give an overview of common functions that offer general information about a Dataframe and are a good starting point for every data inspection.

# prints out the first 5 rows of the dataframe

df_people.head()

# prints out the last 5 rows of the dataframe

df_people.tail()

# summary of the dataframe (columns, data types, memory usage, Non-Null Count etc.)

df_people.info()

# shape of the dataframe (rows, columns)

df_people.shape

# information about the datatype in each column of the dataframe

df_people.dtypes

# statistics summary of the dataframe (count, mean, std, min, 25%, 50%, 75%, max)

df_people.describe()

# get the maximum value of each column

df_people.max()

# get the minimum value of each column

df_people.min()

# returns a boolean series about duplicate rows. True - the row is a duplicate, False - the row is not a duplicate

df_people.duplicated()

Manipulation with DataFrames

Accessing Data

In order to manipulate the data inside a DataFrame it is necessary to access the data. This can be done in the following ways.

# selecting a single column

df_people['first_name']

# selecting multiple columns

df_people[['first_name', 'last_name']]

# selecting rows by index

# selection of the first row

df_people.iloc[0]

# selection of specific values by row and column

df_people.iloc[0, 0]

# selecting rows by index range

# selection of rows 0, 1, 2 (row 3 is not selected)

df_people.iloc[0:3]

# boolean selection

# selection of the row with the first name 'Tina'

df_people[df_people['first_name'] == 'Tina']

# selection by condition

# selection of rows where the age is greater than 50

df_people[df_people['age'] > 50]

The accessibility of a DataFrame can be improved by adding an index to the DataFrame if it does not already exist. This can be a simple range index from 0 to n or an own customized index for more specific needs.

# creating a simple range index

df_people.index = pd.RangeIndex(start=0, stop=len(df_people), step=1)

# accessing data via the index

df_people.loc[3]

# creating a customized index

df_people.index = ['a','b','c','d','e','f','g','h','i','j','k','l','m','n','o']

# accessing data via the index

df_people.loc['c']

Changing Data

Now that we know how to access data, the data can also be modified. This is done like with other datatypes in Python with the `=` operator.

# change the age of the person with the first name 'Tina' to 60

df_people.loc[df_people['first_name'] == 'Tina', 'age'] = 60

A very important function for changing all values in a column at once is the apply() function. It is commonly used to apply a function along a whole axis of a DataFrame. This function is often implemented together with a lambda function, which is a short, anonymous function that is only used once and is not saved in the memory. It is introduced with the lambda keyword. This build-in function should be always preferred over a loop in Python because it is much faster and more efficient.

# change the spelling of the first name to uppercase in all rows

df_people['first_name'] = df_people['first_name'].apply(lambda x: x.upper())

In many situations, it is also useful to replace missing values or undefined values in the DataFrame. These values are often represented by NaN (not a number). They can be replaced with the fillna function by the mode, mean or median column or any other appropriate number.

# calculating the mean, median and mode

mode_age =df_people["age"].mode()

median_age = df_people["age"].median()

mean_age = df_people["age"].mean()

# replace missing values with the mode

df["age"].fillna(median_age, inplace = True)

Rearranging and Grouping Data

Data is often not provided in a structured way, which makes it necessary to rearrange the data to get a better overview. This can be done by sorting and grouping the data.

# sort by a single value (ascending)

df_people.sort_values(by='age')

# sort by a single value (descending)

df_people.sort_values(by='age', ascending=False)

# sort by multiple values (first name and last name)

df_people.sort_values(by=['first_name', 'last_name'])

# reorder the columns by a specific frequency

df_people_reorder = df_people[['last_name','first_name', 'city', 'age']]

The groupby() function splits the data into groups based on defined criteria. This is helpful for group-dependent analysis, such as in the following example about the calculation of the average age in each city.

# caclaulte mean age by city

df_people.groupby('city')['age'].mean()

Deleting Data

The deletion of data plays an important role in data analysis for getting rid of unnecessary or inaccurate data. The following examples in the code give an overview of common delete functions.

# deleting a specific row: axis=0

df_people.drop('a', axis=0)

# deleting a row by index

df_people.drop(index=['b','c'], inplace=True)

# deleting a row by condition

df_people_copy = df_people[df_people['age'] > 50]

# deleting a column

df_people_copy = df_people.drop(columns=['age'])

# deleting multiple columns

df_people_copy = df_people.drop(columns=['age', 'city'])

There is a debate about the usage of the inplace=True parameter, which can be set in many functions to change the data directly in the DataFrame. On the one hand, this way is more convenient, because there is no necessity to create an additional copy. On the other hand, it has some disadvantages, for example, not all functions support this parameter, such as concat(). Furthermore, the parameter prevents building a chain of methods, which is shown in the following code.

result = (df_people

.drop(columns=['age', 'city']) # delete columns age and city

.sort_values(by=['first_name', 'last_name']) # sort by first_name and last_name

.reset_index(drop=True) # reset the index

)

As already mentioned, Pandas includes functions for handling rows and columns with missing or undefined values.

Instead of replacing the values with fillna, it is also possible to drop the rows or columns with the dropna. The final decision about filling or dropping these rows depends on the dataset and personal preferences.

Like in many other functions the dropna function supports the axis parameter, which defines, if a function is applied to the rows or columns.

Further functions, which support the axis parameter are sum(), mean(), min(), max(), count(), median(), and apply() to name only a few.

# deleting row with NaN values

df_people_copy = df_people.dropna()

# deleting columns with NaN values

# axis=1 means columns

# axis=0 means rows

df_people_copy = df_people.dropna(axis=1)

# deleting row with NaN values in a specific column

df_people_copy = df_people.dropna(subset=['age'])

Besides, NaN rows or duplicated rows occur often in datasets. These rows can be removed with the drop_duplicates function.

# delete duplicate rows

df_people_copy = df_people.drop_duplicates()

After deletion of data, it is often necessary to reset the index, because it is not adjusted automatically during the deletion process.

# reset the index of the dataframe

# drop=True: the old index is replaced by the new one

df_people_copy = df_people.reset_index(drop=True)

References

1. Pandas. (n.d.). Getting started with pandas. Retrieved from https://pandas.pydata.org/docs/getting_started/

2. W3Schools. (n.d.). Pandas Tutorial. Retrieved from https://www.w3schools.com/python/pandas/default.asp

3. DataCamp. (n.d.). Pandas Tutorial: DataFrames in Python. Retrieved from https://www.datacamp.com/tutorial/pandas

4. Real Python. (n.d.). Pandas DataFrames: Data Analysis with Python. Retrieved from https://realpython.com/pandas-dataframe/

The author of this entry is Felix Pappe.